Princeton University is developing an automated radar system that detects oncoming traffic and pedestrians around blind corners and alerts drivers and riders to them.

Felix Heide, an assistant professor of computer science at the university, says the system has implications for the safety of motorcyclists.

“We have already tested bicyclists successfully, so motorcyclists and scooter riders will also be easy to detect by our system,” he says.

The system has been tested successfully in cars, but it could also be used on motorcycles.

“It would certainly be suited to be installed on motorcycles as well,” he says.

The system could be useful for detecting a gaggle of cyclists just around the next blind corner on your Sunday morning ride!

It’s not the first system for detecting smaller and more vulnerable road users such as riders of bicycles, motorcycles and scooters.

Volvo has developed technology that alerts drivers to cyclists, and Jaguar is working on technology that makes A-pillars “invisible” so drivers can see smaller road users such as riders.

While we applaud these developments, my concern is that drivers will come to rely on the technology and look out for riders even less.

There is also the concern that the tech could fail.

Radar for blind corners

In the Princeton study, researchers combined artificial intelligence and radar.

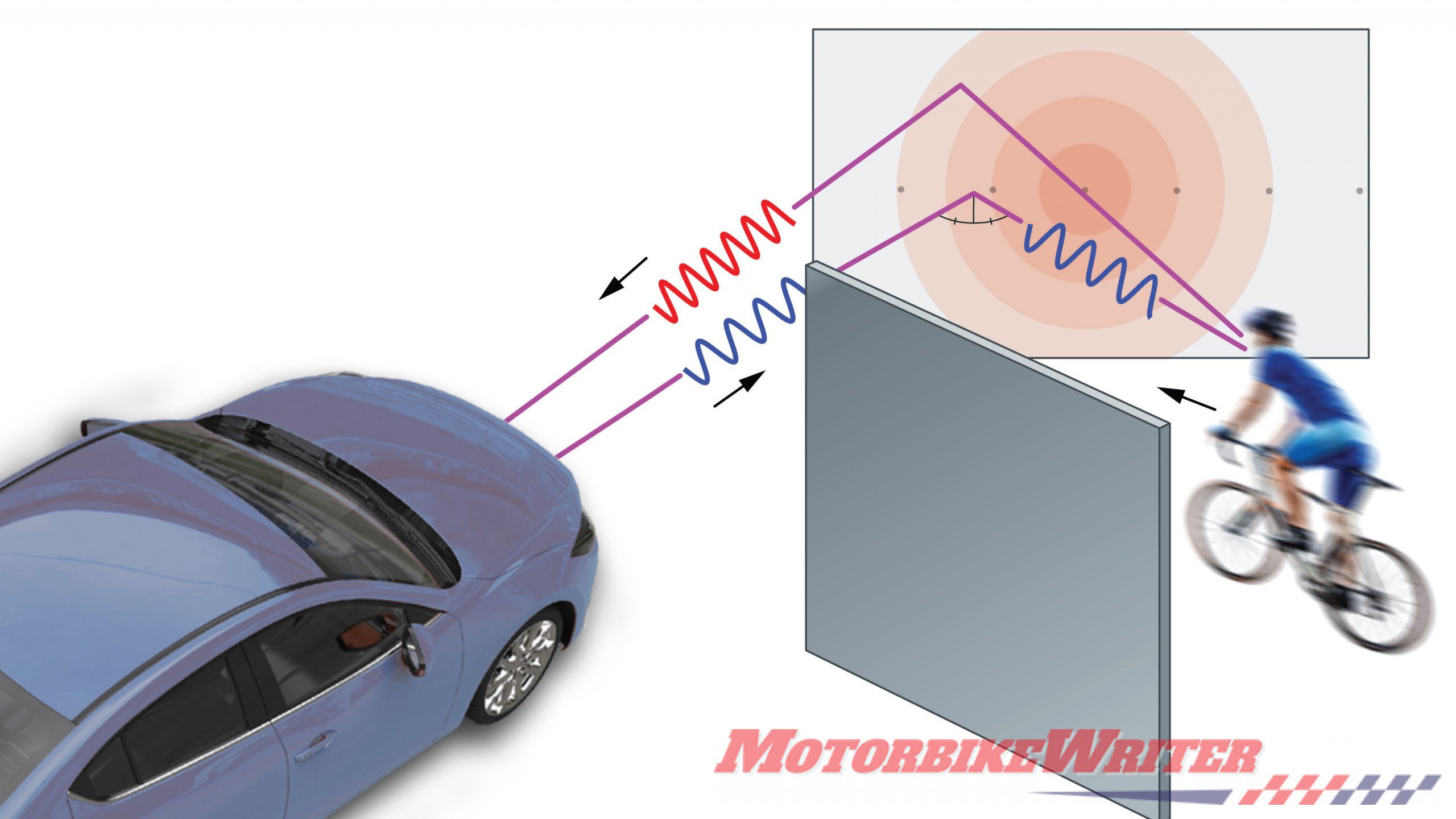

The system uses Doppler radar to bounce radio waves off surfaces such as buildings and parked vehicles.

The radar signal hits the surface at an angle, so it rebounds like a cue ball bouncing off the cushion of a pool table. The signal then goes on to strike objects hidden around the corner.
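To make the cue-ball analogy concrete, here is a simplified 2D sketch, assuming a single flat, perfectly mirror-like wall and ignoring everything else a real radar sees. It is not the Princeton team's code: the function names, coordinates and wall position are purely illustrative. It uses the standard "mirror image" trick. The path from the radar to the wall and on to the hidden object is exactly as long as a straight line from the radar to the object's reflection behind the wall.

```python
import math

Point = tuple[float, float]


def mirror_across_wall(p: Point, wall_x: float) -> Point:
    """Reflect a point across a flat vertical wall lying on the line x = wall_x."""
    x, y = p
    return (2.0 * wall_x - x, y)


def bounce_point(radar: Point, target: Point, wall_x: float) -> Point:
    """Point on the wall where a single mirror-like bounce connects radar and target.

    The straight line from the radar to the target's mirror image crosses the
    wall exactly where the angle of incidence equals the angle of reflection.
    """
    vx, vy = mirror_across_wall(target, wall_x)
    rx, ry = radar
    t = (wall_x - rx) / (vx - rx)  # fraction of the way from radar to mirror image
    return (wall_x, ry + t * (vy - ry))


def one_bounce_path_m(radar: Point, target: Point, wall_x: float) -> float:
    """Radar -> wall -> target distance, equal to the distance to the mirror image."""
    vx, vy = mirror_across_wall(target, wall_x)
    return math.hypot(vx - radar[0], vy - radar[1])


if __name__ == "__main__":
    # Hypothetical layout: radar at the origin, wall 5 m ahead, cyclist hidden at (1, 8) m.
    radar, cyclist, wall_x = (0.0, 0.0), (1.0, 8.0), 5.0
    hit = bounce_point(radar, cyclist, wall_x)
    print(f"bounce point: ({hit[0]:.2f}, {hit[1]:.2f}) m")
    print(f"one-bounce path: {one_bounce_path_m(radar, cyclist, wall_x):.2f} m")
```

In this made-up layout the signal strikes the wall at about (5.00, 4.44) m and travels roughly 12.04 m to reach the cyclist. A real system also has to cope with rough surfaces, several possible bounces and much weaker returns.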

Some of the radar signal bounces back to detectors mounted on the car or motorcycle, allowing the system to see objects around the corner and tell whether they are moving or stationary.
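Telling moving from stationary objects relies on the Doppler effect: a target closing on the radar shifts the frequency of the returning signal. As a rough sketch, assuming a 77 GHz carrier (a common automotive radar band, not a figure from the study) and an arbitrary 0.5 m/s threshold, the standard two-way Doppler relation f_d = 2 v f_c / c converts a measured shift into a closing speed. For a signal that has bounced off a wall, v is the rate at which the bounced path shrinks, not the straight-line speed towards the car. The function names and threshold are hypothetical.

```python
SPEED_OF_LIGHT_MPS = 299_792_458.0
CARRIER_HZ = 77e9  # assumed: typical automotive radar band, not from the study


def doppler_shift_hz(closing_speed_mps: float, carrier_hz: float = CARRIER_HZ) -> float:
    """Two-way Doppler shift for a target closing at the given speed along the signal path."""
    return 2.0 * closing_speed_mps * carrier_hz / SPEED_OF_LIGHT_MPS


def closing_speed_mps(doppler_hz: float, carrier_hz: float = CARRIER_HZ) -> float:
    """Invert the Doppler relation: a positive result means the path is shrinking."""
    return doppler_hz * SPEED_OF_LIGHT_MPS / (2.0 * carrier_hz)


def is_moving(doppler_hz: float, threshold_mps: float = 0.5) -> bool:
    """Hypothetical rule: anything faster than threshold_mps counts as 'moving'."""
    return abs(closing_speed_mps(doppler_hz)) > threshold_mps


if __name__ == "__main__":
    cyclist_shift = doppler_shift_hz(5.0)  # cyclist closing at 5 m/s (18 km/h)
    print(f"cyclist: {cyclist_shift:.0f} Hz, moving={is_moving(cyclist_shift)}")
    print(f"parked car: 0 Hz, moving={is_moving(0.0)}")
```

Under these assumptions a cyclist closing at 18 km/h produces a shift of roughly 2.6 kHz, while a parked car produces none.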

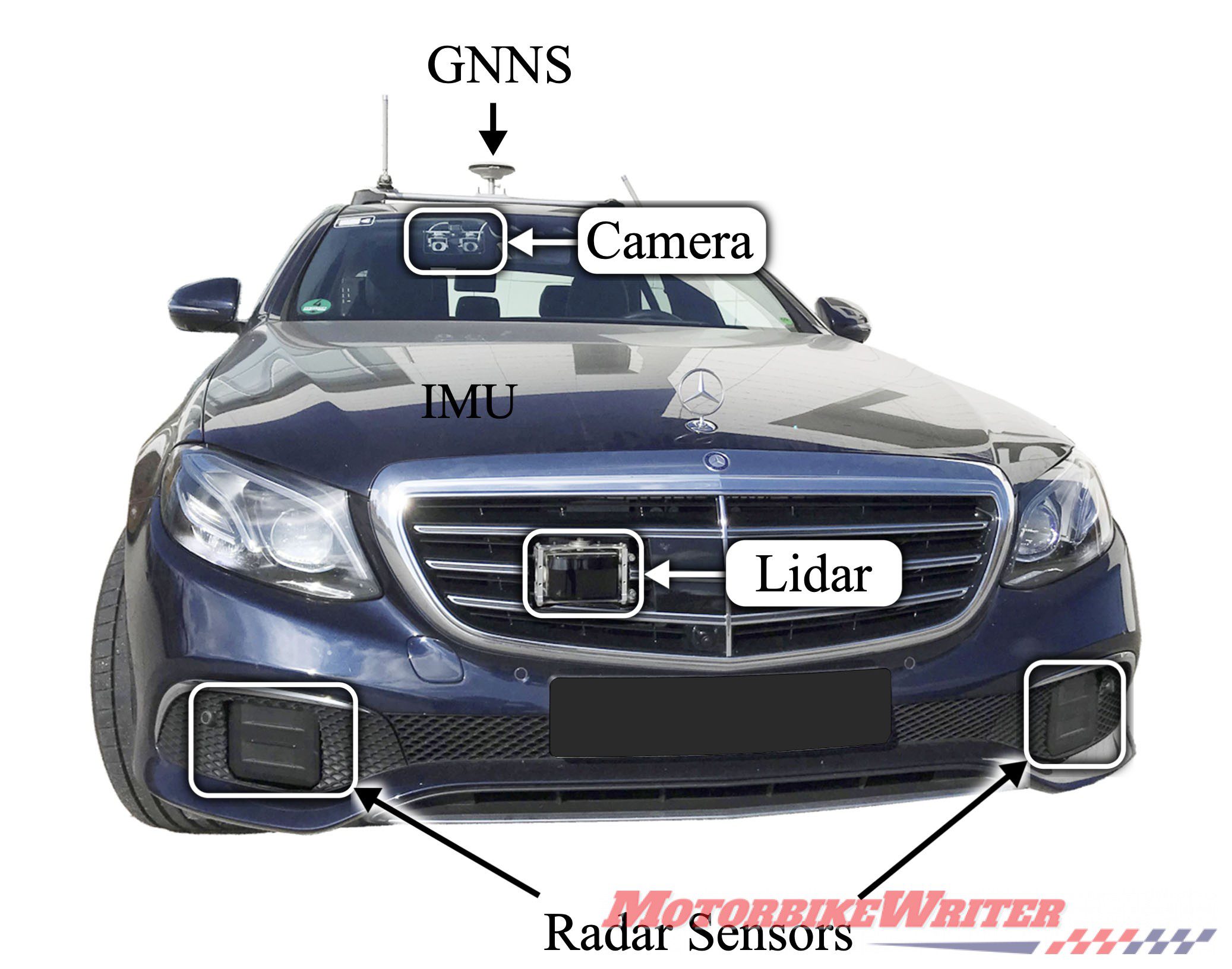

“This will enable cars to see occluded objects that today’s lidar and camera sensors cannot record, for example, allowing a self-driving vehicle to see around a dangerous intersection,” says Felix.

“The radar sensors are also relatively low-cost, especially compared to lidar sensors, and scale to mass production.”

In a paper presented June 16 at the Conference on Computer Vision and Pattern Recognition (CVPR), the researchers described how the system can distinguish objects including cars, bicyclists and pedestrians and gauge their direction and oncoming speed.

“The proposed approach allows for collision warning for pedestrians and cyclists in real-world autonomous driving scenarios — before seeing them with existing direct line-of-sight sensors,” the authors write.

In recent years, engineers have developed a variety of sensor systems that allow cars to detect other objects on the road. Many of them rely on lidar or on cameras using visible or near-infrared light, and collision-avoidance sensors of this kind are now common on modern cars. But optical sensing struggles to spot objects outside the car’s line of sight. In earlier research, Felix’s team used light to see objects hidden around corners, but those methods are not yet practical for cars because they require high-powered lasers and work only at short ranges.

In conducting that earlier research, Felix and his colleagues wondered whether they could build a system that detects hazards outside the car’s line of sight using imaging radar instead of visible light. Radar loses much less signal than light does when reflecting off smooth surfaces, and radar is a proven technology for tracking objects.

The challenge is that radar’s spatial resolution, which determines how well it can picture objects around corners such as cars and bikes, is relatively low. However, the researchers believed they could develop algorithms to interpret the radar data well enough to make the sensors work.
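A quick calculation shows why low spatial resolution matters. Under the small-angle approximation, the width of one angular "pixel" at a given range is roughly the range multiplied by the angular resolution in radians. The 1-degree resolution and the ranges below are illustrative assumptions, not figures from the paper, and the function name is hypothetical.

```python
import math


def cross_range_cell_m(range_m: float, angular_resolution_deg: float) -> float:
    """Approximate width of one angular resolution cell at a given range (small-angle)."""
    return range_m * math.radians(angular_resolution_deg)


if __name__ == "__main__":
    for r in (10.0, 20.0, 40.0):
        print(f"{r:4.0f} m -> {cross_range_cell_m(r, 1.0):.2f} m per cell")
```

With these assumed numbers each cell is already about 0.35 m wide at 20 m and 0.70 m at 40 m, so a pedestrian or cyclist may fill only one or two cells. A signal that reaches the target via a wall travels an even longer path.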

“The algorithms that we developed are highly efficient and fit on current generation automotive hardware systems,” Felix says. “So, you might see this technology already in the next generation of vehicles.”

To allow the system to distinguish objects, Felix’s team processed part of the radar signal that standard radars consider background noise rather than usable information. The team applied artificial intelligence techniques to refine the processing and read the images.

Recognising small road users

Fangyin Wei, a graduate student in computer science and one of the paper’s lead authors, says the computer running the system had to learn to recognise cyclists and pedestrians from a very sparse amount of data.

“First we have to detect if something is there. If there is something there, is it important? Is it a cyclist or a pedestrian?” she says. “Then we have to locate it.”

Fangyin says the system currently detects pedestrians and cyclists because the engineers considered them the most challenging objects, given their small size and varied shapes and motion. She says the system could be adjusted to detect vehicles as well, which would include motorcycles and scooters.

The researchers plan to extend the work in several directions, including new radar applications and further refinements in signal processing.

The paper’s authors also include: Jürgen Dickmann, Florian Krause, Werner Ritter, and Nicolas Schiener of Mercedes-Benz AG; Buu Phan and Fahim Mannan of Algolux; Klaus Dietmayer of Ulm University; and Bernard Sick of the University of Kassel. Support for the research was provided in part by the European Union’s H2020 ECSEL program.